This document provides instructions to replicate our Windows DLL builds.

Requirements:

- Windows 11 (x64)

- Build Tools for Visual Studio 2022

- MSYS2

- CUDA 12.9.1

- AMD HIP SDK 7.1.1

- Vulkan SDK 1.4.341.1
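Before starting, it can help to confirm that the toolchains are reachable from the shell you plan to build in. The following is a minimal sketch, not part of our build scripts. The executable names (`cl`, `nvcc`, `hipcc`, `glslc`) are assumptions about what each requirement puts on `PATH`. The actual build may locate these tools differently, for example through environment variables set by the installers.

```python
import shutil

# Assumed executable names for each requirement (hypothetical mapping);
# the real build may find these tools by other means than PATH.
TOOLS = {
    "MSVC compiler (Build Tools for Visual Studio 2022)": "cl",
    "CUDA compiler": "nvcc",
    "HIP compiler (AMD HIP SDK)": "hipcc",
    "GLSL compiler (Vulkan SDK)": "glslc",
}


def find_tools(tools=TOOLS, which=shutil.which):
    """Map each tool description to its resolved path, or None if not on PATH."""
    return {label: which(exe) for label, exe in tools.items()}


if __name__ == "__main__":
    for label, path in find_tools().items():
        status = "ok" if path else "MISSING"
        print(f"{status:8}{label}: {path or 'not found on PATH'}")
```

Note that `cl` is normally only on `PATH` inside a Visual Studio Developer Command Prompt, so a "MISSING" result in a plain terminal does not necessarily mean the Build Tools are not installed.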

In the MSYS shell

Our Makefile relies heavily on Unix shell utilities, so it is much easier to replicate that environment than to work around it. We need it only to set up the repo (with `make setup`), which initializes all the submodules and applies our patches to the original code. In theory, after the setup you should also be able to run `make -j8` to build llamafile with cosmocc on Windows (but honestly, why?).

- install the required tools (vim is not really required)

- create a build workspace

- clone the repo

- set up the repo with `make setup`

In the Windows terminal

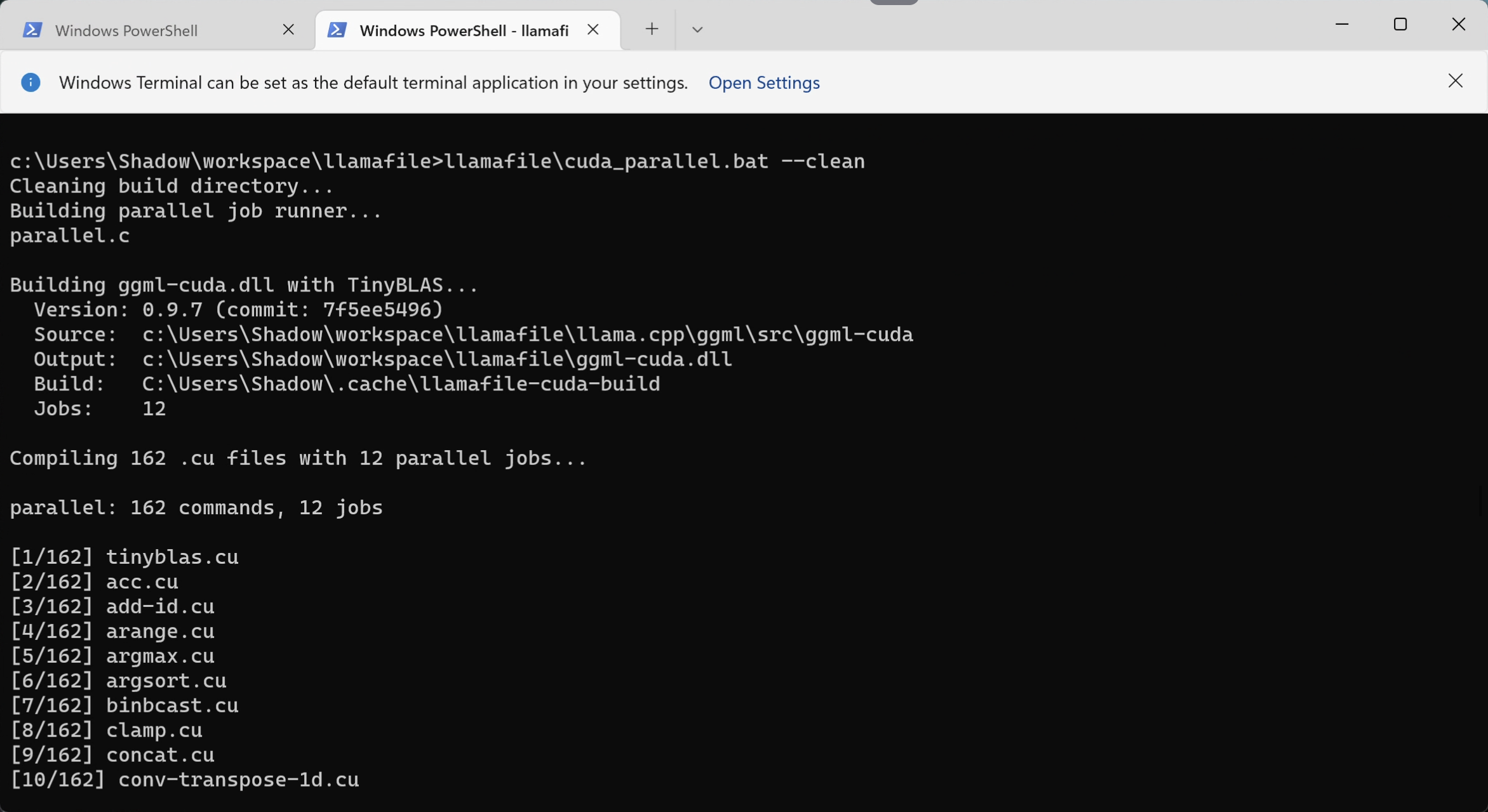

After the repo is set up, you can build the CUDA, ROCm, and Vulkan DLLs as follows.

- from PowerShell, open a Visual Studio 2022 Developer Command Prompt

- cd to the llamafile dir and start the CUDA parallel build (this will run for a while...)

- run the ROCm parallel build as follows:

- run the Vulkan build as follows (a parallel build is not needed; this is usually much faster than the other two):

At the end of this process, you should have the following libraries available in your llamafile directory (note that sizes might differ):

03/31/2026 02:15 PM 717,095,936 ggml-cuda.dll

03/31/2026 02:44 PM 502,854,656 ggml-rocm.dll

03/31/2026 02:46 PM 31,482,880 ggml-vulkan.dll
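A quick way to confirm that all three libraries were produced is to check for them by name. This is a minimal sketch: the file names come from the listing above, while the function name `check_dlls` and the choice to report sizes in bytes are illustrative assumptions. Sizes are reported only for information, since they are expected to vary between builds.

```python
from pathlib import Path

# File names taken from the directory listing above.
EXPECTED_DLLS = ("ggml-cuda.dll", "ggml-rocm.dll", "ggml-vulkan.dll")


def check_dlls(directory, names=EXPECTED_DLLS):
    """Return {name: size in bytes, or None if the file is missing}."""
    root = Path(directory)
    result = {}
    for name in names:
        path = root / name
        result[name] = path.stat().st_size if path.is_file() else None
    return result


if __name__ == "__main__":
    # Run from the llamafile directory.
    for name, size in check_dlls(".").items():
        detail = "missing" if size is None else f"{size:,} bytes"
        print(f"{name}: {detail}")
```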

To run llamafile with these libraries, place them in your home directory or bundle them into your llamafile (see Creating a llamafile).
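The first option, placing the libraries in your home directory, could be scripted roughly as below. This is a sketch under assumptions: the helper `copy_dlls` is hypothetical, and it copies to the top level of the home directory because that is all the text above specifies. Pass a different `dest_dir` if your setup expects a subdirectory.

```python
import shutil
from pathlib import Path

DLL_NAMES = ("ggml-cuda.dll", "ggml-rocm.dll", "ggml-vulkan.dll")


def copy_dlls(src_dir, dest_dir=None, names=DLL_NAMES):
    """Copy the built DLLs found in src_dir to dest_dir; return the copied paths.

    dest_dir defaults to the user's home directory (an assumption based on
    the text above). DLLs that were not built are skipped silently.
    """
    src = Path(src_dir)
    dest = Path(dest_dir) if dest_dir is not None else Path.home()
    dest.mkdir(parents=True, exist_ok=True)
    copied = []
    for name in names:
        candidate = src / name
        if candidate.is_file():
            copied.append(Path(shutil.copy2(candidate, dest / name)))
    return copied


if __name__ == "__main__":
    # Run from the llamafile directory.
    for path in copy_dlls("."):
        print(f"copied {path}")
```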